Introduction

Almost four years ago, I wrote the original post to this series – Microsecond accurate NTP with a Raspberry Pi and PPS GPS. Some things have changed since then, some haven’t. PPS is still an excellent way to get a microsecond accurate Raspberry Pi. Some of the commands and configurations have changed. So let’s run through this again now in 2025. This will basically be the same post but with some updated commands and material suggestions so you can use your Raspberry Pi 5 as a nanosecond accurate PTP (precision time protocol – IEEE 1588) grandmaster.

Original Introduction

Lots of acronyms in that title. If I expand them out, it says – “microsecond accurate network time protocol with a Raspberry Pi and global positioning system pulse per second”. What it means is you can get super accurate timekeeping (1 microsecond = 0.000001 seconds) with a Raspberry Pi and a GPS receiver that spits out pulses every second. By following this guide, you will have your very own Stratum 1 NTP server at home!

Why would you need time this accurate at home?

You don’t. There aren’t many applications for this level of timekeeping in general, and even fewer at home. But this blog is called Austin’s Nerdy Things so here we are. Using standard, default internet NTP these days will get your computers to within 2-4 milliseconds of actual time (1 millisecond = 0.001 seconds). Pretty much every internet connected device these days has a way to get time from the internet. PPS gets you to the next SI prefix in terms of accuracy (milli -> micro), which means 1000x more accurate timekeeping. With some other tricks, you can get into the nanosecond range (also an upcoming post topic!).

Materials Needed

- Raspberry Pi 5 – the 3’s ethernet is hung off a USB connection so while the 3 itself can get great time, it is severely limited in how accurate other machines can sync to it. Raspberry Pi 4 would work decently. But Raspberry Pi 5 supports Precision Time Protocol (PTP), which can get synchronizations down to double-digit nanoseconds. So get the 5. Ideally, your Pi isn’t doing much other than keeping time, so no need to get one with lots of memory.

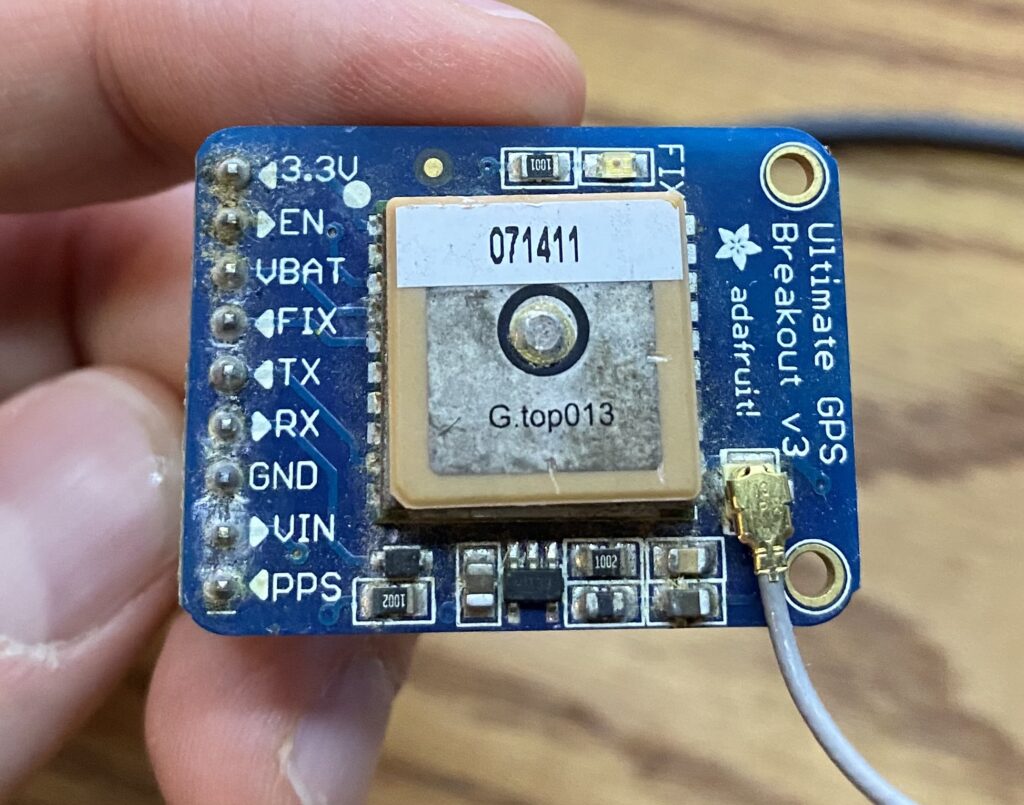

- A timing-specific GPS module – these have algorithms tuned to provide extremely precise PPS signals. For example, by default, they prefer satellites with higher elevations, and have special fixed position modes where they know they aren’t moving so they focus on providing the best time possible. u-blox devices, for instance, have a “survey-in” mode where the positions are essentially averaged over a specified amount of time and standard deviations to a singular, fixed location. Other options:

- u-blox LEA-6T

- u-blox LEA-M8T

- For either of the LEA modules commonly found on eBay, you’ll also need a SMB to SMA connector and some 2.00 mm to 2.54 mm jumper wires

- (overkill) – u-blox ZED-F9T – any 9th+ gen will be expensive but have exceptional precision/accuracy (this one rated to 4 ns)

- any u-blox module ending in T (time)

- (slightly optional but much better results) – GPS antenna

- Wires to connect it all up (5 wires needed – 5V/RX/TX/GND/PPS)

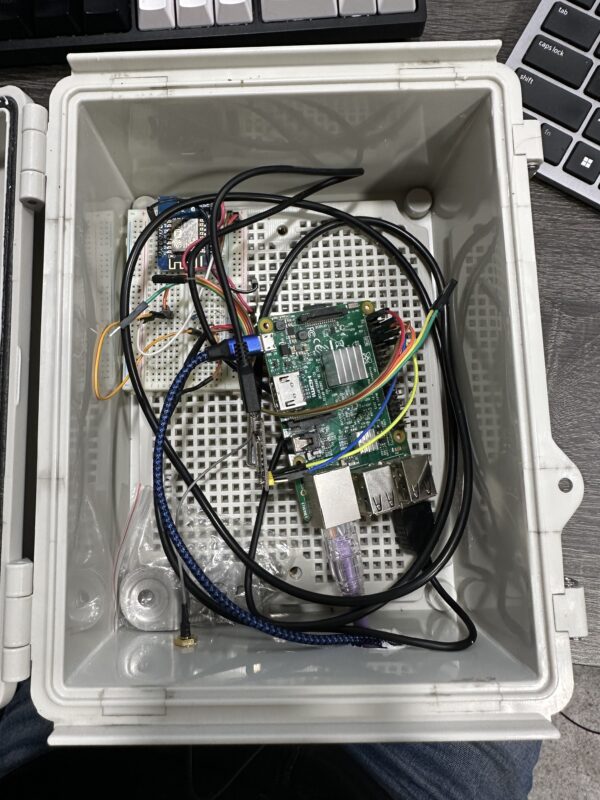

- project box to stuff it all in – Temperature stability is super important for accurate time. There is a reason some of the most accurate oscillators are called oven controlled crystal oscillators (OCXO) – they are extremely stable. This box keeps airflow from minutely cooling/heating the Pi.

Steps

0 – Update your Pi and install packages

This NTP guide assumes you have a Raspberry Pi ready to go.

You should update your Pi to latest before basically any project. We will install some other packages as well. pps-tools helps us check that the Pi is receiving PPS signals from the GPS module. We also need GPSd for the GPS decoding of both time and position (and for ubxtools which we will use to survey-in). I use chrony instead of NTPd because it seems to sync faster than NTPd in most instances and also handles PPS without compiling from source (the default Raspbian NTP doesn’t do PPS). Installing chrony will remove ntpd.

    sudo apt update
    sudo apt upgrade
    # this isn't really necessary, maybe if you have a brand new pi
    # sudo rpi-update
    sudo apt install pps-tools gpsd gpsd-clients chrony

1 – Add GPIO and module info where needed

In /boot/firmware/config.txt (changed from last post), add ‘dtoverlay=pps-gpio,gpiopin=18’ to a new line. This is necessary for PPS. If you want to get the NMEA data from the serial line, you must also enable UART and set the initial baud rate.

    ########## NOTE: at some point, the config file changed from /boot/config.txt to /boot/firmware/config.txt
    sudo bash -c "echo '# the next 3 lines are for GPS PPS signals' >> /boot/firmware/config.txt"
    sudo bash -c "echo 'dtoverlay=pps-gpio,gpiopin=18' >> /boot/firmware/config.txt"
    sudo bash -c "echo 'enable_uart=1' >> /boot/firmware/config.txt"
    sudo bash -c "echo 'init_uart_baud=9600' >> /boot/firmware/config.txt"

In /etc/modules, add ‘pps-gpio’ to a new line.

sudo bash -c "echo 'pps-gpio' >> /etc/modules"
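One thing to watch with the `echo ... >>` approach: running it twice appends the same lines twice. Here is a minimal Python sketch of an idempotent version that only adds lines that are missing. The helper name `append_missing_lines` is made up for illustration, and the demo runs against a throwaway temp file rather than the real /boot/firmware/config.txt (for the real file you would need to run it as root).

```python
import tempfile
from pathlib import Path


def append_missing_lines(path, lines):
    """Append each line to the file only if it is not already present.

    Returns the list of lines that were actually added.
    """
    target = Path(path)
    text = target.read_text() if target.exists() else ""
    present = {line.strip() for line in text.splitlines()}
    added = [line for line in lines if line.strip() not in present]
    if added:
        # Start on a fresh line if the file does not end with a newline
        prefix = "" if text == "" or text.endswith("\n") else "\n"
        with target.open("a") as f:
            f.write(prefix + "\n".join(added) + "\n")
    return added


# Demo against a throwaway file, not the real config.txt
with tempfile.TemporaryDirectory() as tmp:
    demo = Path(tmp) / "config.txt"
    demo.write_text("enable_uart=1\n")
    wanted = [
        "dtoverlay=pps-gpio,gpiopin=18",
        "enable_uart=1",
        "init_uart_baud=9600",
    ]
    print(append_missing_lines(demo, wanted))  # first run adds the two missing lines
    print(append_missing_lines(demo, wanted))  # second run adds nothing
```

The match is on the whole stripped line, so a commented-out `# enable_uart=1` does not count as present.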

Reboot

sudo reboot

Let’s also disable a bunch of stuff we don’t need:

2 – wire up the GPS module to the Pi

Disclaimer – I am writing this guide with a combination of Raspberry Pi 4/5 and Adafruit Ultimate GPS module, but will swap out with the LEA-M8T when it arrives.

Pin connections:

- GPS PPS to RPi pin 12 (GPIO 18)

- GPS VIN to RPi pin 2 or 4

- GPS GND to RPi pin 6

- GPS RX to RPi pin 8

- GPS TX to RPi pin 10

- see 2nd picture for a visual

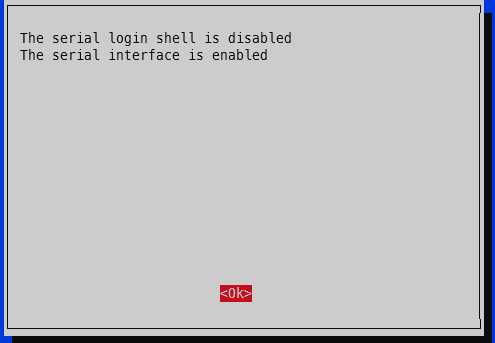

3 – enable serial hardware port

Run raspi-config -> 3 – Interface options -> I6 – Serial Port -> Would you like a login shell to be available over serial -> No. -> Would you like the serial port hardware to be enabled -> Yes.

4 – verify PPS

First, check that PPS is loaded. You should see a single line showing pps_gpio:

lsmod | grep pps

    austin@raspberrypi4:~ $ lsmod | grep pps
    pps_gpio 12288 0

Now check for the actual PPS pulses. NOTE: you need at least 4 satellites locked on for PPS signal. The GPS module essentially has 4 unknowns – x, y, z, and time. You need three satellites minimum to solve x, y, and z and a fourth for time. Exception for the timing modules – if they know their x, y, z via survey-in or fixed set location, they only need a single satellite for time!

sudo ppstest /dev/pps0

    austin@raspberrypi4:~ $ sudo ppstest /dev/pps0
    trying PPS source "/dev/pps0"
    found PPS source "/dev/pps0"
    ok, found 1 source(s), now start fetching data...
    source 0 - assert 1739485509.000083980, sequence: 100 - clear 0.000000000, sequence: 0
    source 0 - assert 1739485510.000083988, sequence: 101 - clear 0.000000000, sequence: 0
    source 0 - assert 1739485511.000083348, sequence: 102 - clear 0.000000000, sequence: 0
    source 0 - assert 1739485512.000086343, sequence: 103 - clear 0.000000000, sequence: 0
    source 0 - assert 1739485513.000086577, sequence: 104 - clear 0.000000000, sequence: 0
    ^C
    austin@raspberrypi4:~ $
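If you want numbers instead of eyeballing the ppstest output, here is a rough Python sketch that parses the assert timestamps and reports how far each one lands from the whole second and how steady the pulse-to-pulse spacing is. The function names are hypothetical, the sample is the five lines from the capture above, and the sub-second value is read here simply as "system clock vs. pulse edge" before chrony has disciplined anything.

```python
import re

# Matches the "assert <sec>.<nsec>, sequence: <n>" part of a ppstest line
ASSERT_RE = re.compile(r"assert (\d+)\.(\d{9}), sequence: (\d+)")

SAMPLE = """\
source 0 - assert 1739485509.000083980, sequence: 100 - clear 0.000000000, sequence: 0
source 0 - assert 1739485510.000083988, sequence: 101 - clear 0.000000000, sequence: 0
source 0 - assert 1739485511.000083348, sequence: 102 - clear 0.000000000, sequence: 0
source 0 - assert 1739485512.000086343, sequence: 103 - clear 0.000000000, sequence: 0
source 0 - assert 1739485513.000086577, sequence: 104 - clear 0.000000000, sequence: 0
"""


def parse_ppstest(text):
    """Return a list of (sequence, seconds, nanoseconds) tuples.

    Seconds and nanoseconds stay separate integers because a float
    cannot hold a current epoch timestamp to nanosecond precision.
    """
    return [(int(m.group(3)), int(m.group(1)), int(m.group(2)))
            for m in ASSERT_RE.finditer(text)]


def summarize(pulses):
    """Summarize sub-second offsets and pulse-to-pulse intervals, in ns."""
    if len(pulses) < 2:
        raise ValueError("need at least two pulses")
    # Signed distance of each timestamp from the nearest whole second
    offsets = [ns if ns < 500_000_000 else ns - 1_000_000_000
               for _, _, ns in pulses]
    # Elapsed time between consecutive pulses
    intervals = [(s2 - s1) * 1_000_000_000 + (n2 - n1)
                 for (_, s1, n1), (_, s2, n2) in zip(pulses, pulses[1:])]
    return {
        "count": len(pulses),
        "mean_offset_ns": sum(offsets) / len(offsets),
        "offset_spread_ns": max(offsets) - min(offsets),
        "worst_interval_error_ns": max(abs(i - 1_000_000_000) for i in intervals),
    }


print(summarize(parse_ppstest(SAMPLE)))
```

For the capture above this works out to a mean offset of roughly 85 microseconds with about 3 microseconds of spread, which is the kind of starting point you would expect before chrony takes over.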

5 – change GPSd boot options to start immediately

There are a couple options we need to tweak with GPSd to ensure it is available upon boot. This isn’t strictly necessary for PPS only operation, but if you want the general NMEA time information (i.e. not just the exact second marker from PPS), this is necessary.

Edit /etc/default/gpsd:

    # USB might be /dev/ttyACM0
    # serial might be /dev/ttyS0
    # on raspberry pi 5 with raspberry pi os based on debian 12 (bookworm)
    DEVICES="/dev/ttyAMA0 /dev/pps0"

    # -n means start without a client connection (i.e. at boot)
    GPSD_OPTIONS="-n"

    # also start in general
    START_DAEMON="true"

    # Automatically hot add/remove USB GPS devices via gpsdctl
    USBAUTO="true"

I’m fairly competent at using systemd and such in a Debian-based system, but there’s something about GPSd that’s a bit odd and I haven’t taken the time to figure out yet. So instead of enabling/restarting the service, reboot the whole Raspberry Pi.

sudo reboot

5 – check GPS for good measure

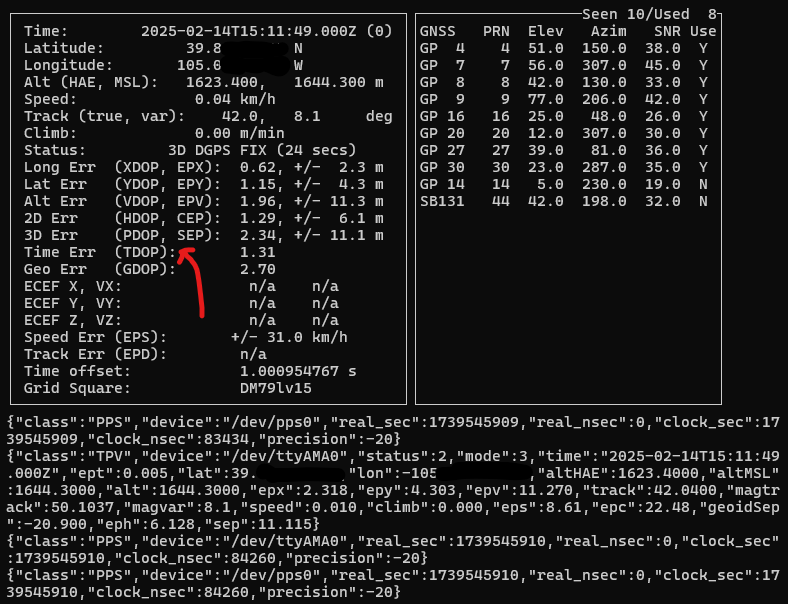

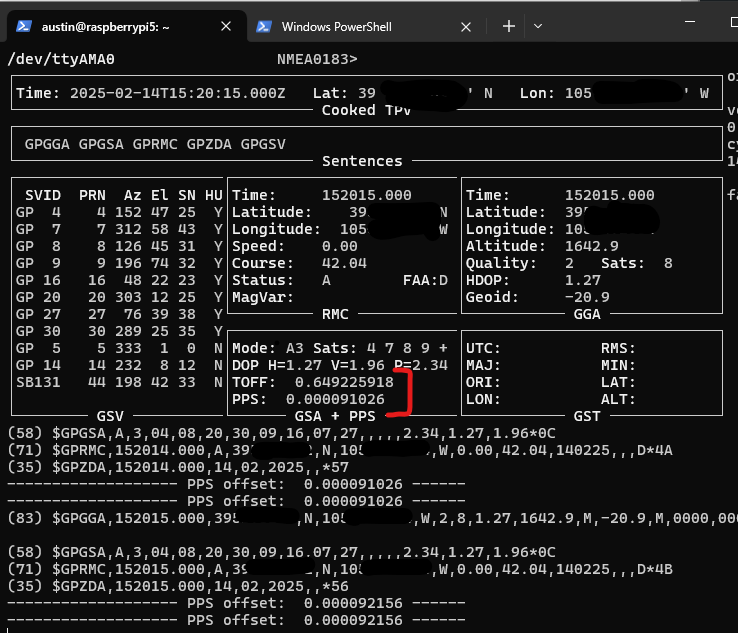

To ensure your GPS has a valid position, you can run gpsmon or cgps to check satellites and such. This check also ensures GPSd is functioning as expected. If your GPS doesn’t have a position solution, you won’t get a good time signal. If GPSd isn’t working, you won’t get any updates on the screen. The top portion will show the analyzed GPS data and the bottom portion will scroll by with the raw GPS sentences from the GPS module.

gpsmon is a bit easier to read for timing info, cgps is a bit easier to read for satellite info (and TDOP, timing dilution of precision, a measure of how accurate the GPS’s internal time determination is).

Here’s a screenshot from cgps showing the current status of my Adafruit Ultimate GPS inside my basement. There are 10 PRNs (satellites) seen, 8 used. It is showing “3D DGPS FIX”, which is the highest accuracy this module offers. The various *DOPs show the estimated errors. Official guides/docs usually say anything < 2.0 is ideal but lower is better. For reference, Arduplane (autopilot software for RC drones, planes) has a limit of 1.4 for HDOP. It will not permit takeoff with a value greater than 1.4. It is sort of a measure of how spread out the satellites are for that given measure. Evenly distributed around the sky is better for location, closer together is better for timing.

cgps

And for gpsmon, it shows both the TOFF, which is the time offset from the NMEA $GPZDA sentence, as well as the PPS offset. The NMEA sentence will always come in late because of how long it takes to transmit the dozens of bytes over serial. For example, a 79 byte sentence over a 9600 bit per second link (super common for GPS modules) takes 79*(8 bits per byte + 1 start bit + 1 stop bit)/9600 = 82.3 milliseconds. This particular setup is not actually using PPS at the moment. gpsmon also shows satellites and a few *DOPs but notably lacks TDOP.

gpsmon

Both gpsmon and cgps will stream the sentences received from the GPS module.
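The serial delay arithmetic from the gpsmon discussion (79 bytes at 9600 bps = 82.3 ms) is easy to put in a tiny Python helper so you can try other sentence lengths and baud rates. This is just a sketch assuming standard 8N1 framing (8 data bits, 1 start bit, 1 stop bit); the function name is made up.

```python
def serial_tx_time_ms(num_bytes, baud, data_bits=8, start_bits=1, stop_bits=1):
    """Time in milliseconds to clock num_bytes out of a UART at the given baud rate."""
    bits_on_wire = num_bytes * (data_bits + start_bits + stop_bits)
    return bits_on_wire / baud * 1000.0


# The 79 byte sentence from the text at a few common baud rates
for baud in (9600, 38400, 115200):
    print(f"{baud:>6} bps: {serial_tx_time_ms(79, baud):.1f} ms")
```

Keep in mind this is only the time for one sentence. A module that emits several sentences per second pushes the time-of-day sentence even later, depending on where it sits in the burst.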

6 – configure chrony to use both NMEA and PPS signals

Now that we know our Raspberry Pi is receiving both the precision second marker (via PPS), as well as the time of day (TOD) data (via the NMEA $GPRMC and $GPZDA sentences), let’s set up chrony to use both sources for accurate time.

This can be done as a one step process, but it is better to gather some statistics about the delay on your own NMEA sentences. So, let’s add our reference sources and also enable logging for chrony.

In the chrony configuration file (/etc/chrony/chrony.conf), add the following near the existing server directives

    # SHM refclock is shared memory driver, it is populated by GPSd and read by chrony
    # it is SHM 0
    # refid is what we want to call this source = NMEA
    # offset = 0.000 means we do not yet know the delay
    # precision is how precise this is. note 1e-3 = 1 millisecond, so not very precise
    # poll 0 means poll every 2^0 seconds = 1 second poll interval
    # filter 3 means combine the 3 most recent readings (chrony uses a median filter). NMEA can be jumpy so we're smoothing here
    refclock SHM 0 refid NMEA offset 0.000 precision 1e-3 poll 0 filter 3

    # PPS refclock is PPS specific, with /dev/pps0 being the source
    # refid PPS means call it the PPS source
    # lock NMEA means this PPS source will also lock to the NMEA source for time of day info
    # offset = 0.0 means no offset... this should probably always remain 0
    # poll 3 = poll every 2^3=8 seconds. polling more frequently isn't necessarily better
    # trust means we trust this time. the NMEA will be kicked out as false ticker eventually, so we need to trust the combo
    refclock PPS /dev/pps0 refid PPS lock NMEA offset 0.0 poll 3 trust

    # also enable logging by uncommenting the logging line
    log tracking measurements statistics

Restart chrony

sudo systemctl restart chrony

Now let’s check to see what Chrony thinks is happening:

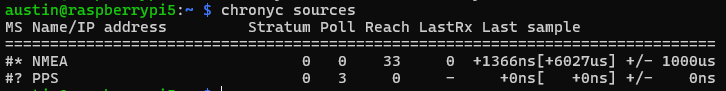

chronyc sources

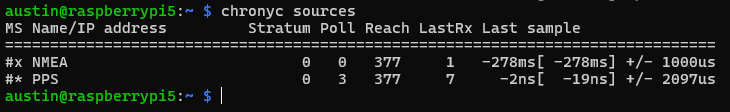

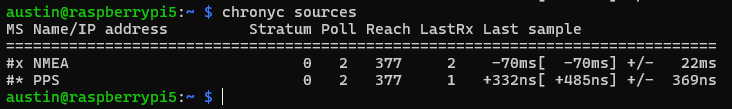

This screenshot was taken seconds after restarting chrony. The * in front of NMEA means that’s the currently selected source. This makes sense since the PPS source hasn’t even been polled yet (see the 0 in the reach column). The ? in front of PPS means it isn’t sure about it yet.

Wait a minute or two and try again.

Now Chrony has selected PPS as the current source, shown by the * in front. The NMEA source has been marked as a “false ticker” with the x in front, but since we trusted the PPS source, it’ll remain the preferred source. Running with only two sources is usually not advisable when using general internet NTP servers: if the two disagree, Chrony can’t know which one is right, hence more than 2 is recommended.

The relatively huge estimated error is because Chrony used the NMEA source first, which was quite a bit off of the PPS precise second marker (i.e. >100 milliseconds off) and it takes time to average down to a more realistic number.

Since we turned on statistics, we can use that to set an exact offset for NMEA. After waiting a bit (an hour or so), you can cat /var/log/chrony/statistics.log:

    austin@raspberrypi5:~ $ sudo cat /var/log/chrony/statistics.log
    ====================================================================================================================
    Date (UTC) Time     IP Address  Std dev'n Est offset  Offset sd  Diff freq   Est skew   Stress  Ns  Bs  Nr  Asym
    ====================================================================================================================
    2025-02-14 17:38:01 NMEA        8.135e-02 -3.479e-01  3.529e-02 -5.572e-03  1.051e-02  7.6e-03  19   0   9  0.00
    2025-02-14 17:38:03 NMEA        7.906e-02 -3.592e-01  3.480e-02 -5.584e-03  9.308e-03  1.1e-03  20   0   9  0.00
    2025-02-14 17:38:04 NMEA        7.696e-02 -3.641e-01  3.252e-02 -5.550e-03  8.331e-03  3.6e-03  21   0  10  0.00
    2025-02-14 17:38:05 NMEA        6.395e-02 -3.547e-01  2.809e-02 -4.309e-03  8.679e-03  1.5e-01  22   4   8  0.00
    2025-02-14 17:38:02 PPS         7.704e-07 -1.301e-06  5.115e-07 -4.547e-08  2.001e-07  5.2e+00  15   9   5  0.00
    2025-02-14 17:38:06 NMEA        3.150e-02 -2.932e-01  2.162e-02  1.269e-02  5.169e-02  2.0e+00  19  13   3  0.00
    2025-02-14 17:38:08 NMEA        3.997e-02 -3.018e-01  3.025e-02  7.709e-03  6.287e-02  9.6e-02   7   1   4  0.00
    2025-02-14 17:38:09 NMEA        4.024e-02 -3.000e-01  2.954e-02  6.534e-03  6.646e-02  1.9e-02   7   1   3  0.00
    2025-02-14 17:38:10 NMEA        3.698e-02 -3.049e-01  2.471e-02  4.366e-03  3.917e-02  3.3e-02   7   0   5  0.00
    2025-02-14 17:38:11 NMEA        3.695e-02 -3.189e-01  2.301e-02  1.258e-03  2.839e-02  7.9e-02   8   0   5  0.00
    2025-02-14 17:38:13 NMEA        3.449e-02 -3.099e-01  2.239e-02  2.141e-03  1.940e-02  3.1e-02   9   0   6  0.00
    2025-02-14 17:38:09 PPS         6.367e-07 -5.065e-07  3.744e-07 -6.500e-08  1.769e-07  9.9e-02   7   1   4  0.00

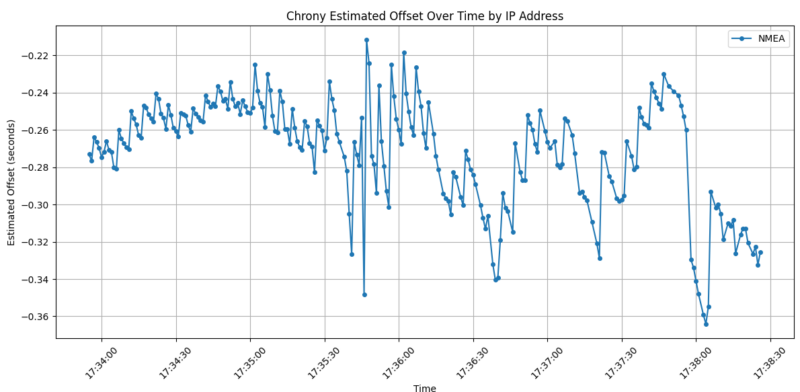

We are interested in the ‘Est offset’ (estimated offset) for the NMEA “IP Address”. Here’s a python script to run some numbers for you – just copy + paste the last 100 or so lines from the statistics.log file into a file named ‘chrony_statistics.log’ in the same directory as this python file:

    from io import StringIO

    import matplotlib.pyplot as plt
    import pandas as pd


    def parse_chrony_stats(file_path):
        """
        Parse chrony statistics log file and return a pandas DataFrame
        """
        # read file contents first
        with open(file_path, 'r') as f:
            file_contents = f.readlines()

        # for each line, if it starts with '=' or ' ', skip it
        file_contents = [line for line in file_contents if not line.startswith('=') and not line.startswith(' ')]

        # exclude lines that include 'PPS'
        file_contents = [line for line in file_contents if 'PPS' not in line]

        # Use StringIO to create a file-like object from the filtered contents
        csv_data = StringIO(''.join(file_contents))

        # Read the filtered data using pandas
        # (sep=r'\s+' does the job of delim_whitespace=True, which newer pandas deprecates)
        df = pd.read_csv(csv_data,
                         sep=r'\s+',
                         names=['Date', 'Time', 'IP_Address', 'Std_dev', 'Est_offset', 'Offset_sd',
                                'Diff_freq', 'Est_skew', 'Stress', 'Ns', 'Bs', 'Nr', 'Asym'])

        # Combine Date and Time columns into a datetime column
        df['timestamp'] = pd.to_datetime(df['Date'] + ' ' + df['Time'])

        return df


    def plot_est_offset(df):
        """
        Create a plot of Est_offset vs time for each IP address
        """
        plt.figure(figsize=(12, 6))

        # Plot each IP address as a separate series
        for ip in df['IP_Address'].unique():
            ip_data = df[df['IP_Address'] == ip]
            plt.plot(ip_data['timestamp'], ip_data['Est_offset'],
                     marker='o', label=ip, linestyle='-', markersize=4)

        plt.xlabel('Time')
        plt.ylabel('Estimated Offset (seconds)')
        plt.title('Chrony Estimated Offset Over Time by IP Address')
        plt.legend()
        plt.grid(True)

        # Rotate x-axis labels for better readability
        plt.xticks(rotation=45)

        # Adjust layout to prevent label cutoff
        plt.tight_layout()

        return plt


    def analyze_chrony_stats(file_path):
        """
        Main function to analyze chrony statistics
        """
        # Parse the data
        df = parse_chrony_stats(file_path)

        # Create summary statistics
        summary = {
            'IP Addresses': df['IP_Address'].nunique(),
            'Time Range': f"{df['timestamp'].min()} to {df['timestamp'].max()}",
            'Average Est Offset by IP': df.groupby('IP_Address')['Est_offset'].mean().to_dict(),
            'Max Est Offset by IP': df.groupby('IP_Address')['Est_offset'].max().to_dict(),
            'Min Est Offset by IP': df.groupby('IP_Address')['Est_offset'].min().to_dict(),
            'Median Est Offset by IP': df.groupby('IP_Address')['Est_offset'].median().to_dict()
        }

        # Create the plot
        plot = plot_est_offset(df)

        return df, summary, plot


    # Example usage
    if __name__ == "__main__":
        file_path = "chrony_statistics.log"  # Replace with your file path
        df, summary, plot = analyze_chrony_stats(file_path)

        # Print summary statistics
        print("\nChrony Statistics Summary:")
        print("-" * 30)
        print(f"Number of IP Addresses: {summary['IP Addresses']}")
        print(f"Time Range: {summary['Time Range']}")

        print("\nAverage Estimated Offset by IP:")
        for ip, avg in summary['Average Est Offset by IP'].items():
            print(f"{ip}: {avg:.2e}")

        print("\nMedian Estimated Offset by IP:")
        for ip, median in summary['Median Est Offset by IP'].items():
            print(f"{ip}: {median:.2e}")

        # Show the plot
        plt.show()

We get a pretty graph (and by pretty, I mean ugly – this is highly variable, with the slow 9600 default bits per second, the timing will actually be influenced by the number of seen/tracked satellites since we haven’t messed with what sentences are outputted) and some outputs.

And the avg/median offset:

    Chrony Statistics Summary:
    ------------------------------
    Number of IP Addresses: 1
    Time Range: 2025-02-14 17:33:55 to 2025-02-14 17:38:26

    Average Estimated Offset by IP:
    NMEA: -2.71e-01

    Median Estimated Offset by IP:
    NMEA: -2.65e-01

So we need to pick a number here for the offset. They do not differ by much, 271 milliseconds vs 265. Let’s just split the difference at 268. Very scientific. With this number, we can change the offset in the chrony config for the NMEA source. The measured offsets are negative (the NMEA time arrives late), so enter the value as a positive number to cancel it out.

refclock SHM 0 refid NMEA offset 0.268 precision 1e-3 poll 0 filter 3
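The "split the difference" step can be written down in a few lines of Python if you would rather not do it by hand. This is only a sketch of that same back-of-the-envelope approach (midpoint of mean and median, sign flipped, rounded to the millisecond), not anything chrony itself prescribes, and the function names are made up.

```python
import statistics


def midpoint_offset(mean, median):
    """Midpoint of mean and median Est offset, sign-flipped for the refclock offset."""
    return round(-(mean + median) / 2, 3)


def suggest_refclock_offset(est_offsets):
    """Same idea, starting from a list of raw Est offset samples (in seconds)."""
    return midpoint_offset(statistics.fmean(est_offsets),
                           statistics.median(est_offsets))


# The summary numbers from the text: mean -271 ms, median -265 ms
offset = midpoint_offset(-0.271, -0.265)
print(f"refclock SHM 0 refid NMEA offset {offset:.3f} precision 1e-3 poll 0 filter 3")
```

If the mean and median are far apart, that is a hint the NMEA timing is skewed by outliers and a single fixed offset may not fit well.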

This usually works but I’m not getting good results so please refer to the previous post for how this should look. Turns out with the default sentences, some of the timing was attributed to 900-1000 milliseconds late, meaning the Pi was synchronizing to a full second later than actual. A couple of options to resolve this: increase the baudrate, and reduce/eliminate unnecessary NMEA sentences. I increased the baudrate below, which won’t be necessary for any modules that default to a baudrate higher than 9600. If you don’t care about monitoring the GPS status, disable all sentences except for ZDA (time info).

I took an hour or so detour here to figure out how to change the baudrate on the MTK chip used in the Adafruit GPS module.

Long story short on the baudrate change:

    austin@raspberrypi5:~ $ cat gps-baud-change.sh
    #!/bin/bash

    # Stop gpsd service and socket
    sudo systemctl stop gpsd.service gpsd.socket

    # Set the baud rate
    # (single quotes so bash doesn't try to expand $PMTK251 as a variable)
    sudo gpsctl -f -x '$PMTK251,38400*27\r\n' /dev/ttyAMA0

    # Start gpsd back up
    sudo systemctl start gpsd.service
    #gpsd -n -s 38400 /dev/ttyAMA0 /dev/pps0

    sudo systemctl restart chrony

How to automate this via systemd or whatever is the topic for another post. The GPS module will keep the baudrate setting until it loses power (so it’ll persist through a Pi reboot!).
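The `*27` at the end of the PMTK251 command is the standard NMEA checksum: the XOR of every character between the `$` and the `*`, written as two hex digits. Here is a small Python sketch that computes it, so you can check the command above or build one for another baud rate. The helper names are made up, and whether your module accepts a given baud rate is something to confirm in its datasheet.

```python
def nmea_checksum(payload):
    """XOR of all bytes between '$' and '*', as two uppercase hex digits."""
    checksum = 0
    for byte in payload.encode("ascii"):
        checksum ^= byte
    return f"{checksum:02X}"


def build_sentence(payload):
    """Wrap a payload such as 'PMTK251,38400' into a full '$...*XX' sentence."""
    return f"${payload}*{nmea_checksum(payload)}"


def is_valid(sentence):
    """Check that the checksum on a '$...*XX' sentence matches its payload."""
    body, sep, given = sentence.strip().lstrip("$").partition("*")
    return sep == "*" and given.upper() == nmea_checksum(body)


print(build_sentence("PMTK251,38400"))  # reproduces the command used in the script
print(is_valid("$PMTK251,38400*27\r\n"))
```

The same check works on the sentences coming back from the module, which is handy when you suspect a baud rate mismatch is garbling the serial stream.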

Turns out that the offset needs to be 511 milliseconds for my Pi/Adafruit GPS at 38400 bps:

    austin@raspberrypi5:~ $ sudo cat /etc/chrony/chrony.conf
    refclock SHM 0 refid NMEA offset 0.511 precision 1e-3 poll 2
    refclock PPS /dev/pps0 refid PPS lock NMEA poll 2 trust

Reboot and wait a few minutes.

7 – verify Chrony is using PPS and NMEA

Now we can check what Chrony is using for sources with

    chronyc sources
    # or if you want to watch it change as it happens
    watch -n 1 chronyc sources

Many people asked how to get both time/NMEA and PPS from a single GPS receiver (i.e. without a 3rd source) and this is how. The keys are the lock directive as well as the trust directive on the PPS source.

8 – results

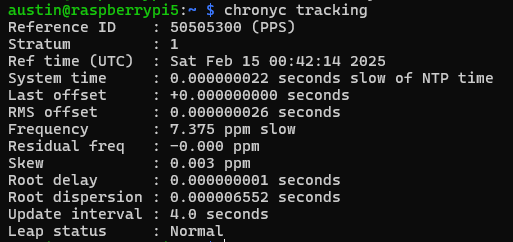

Check what chrony thinks of the system clock with

chronyc tracking

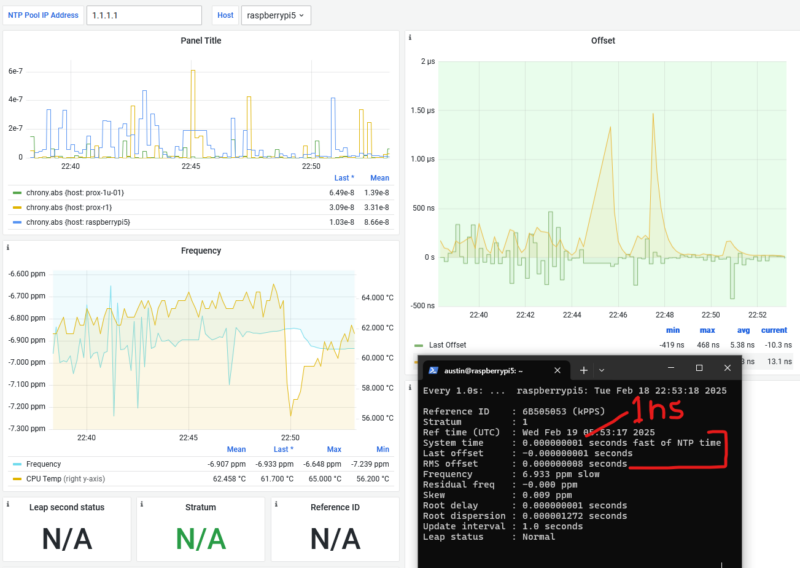

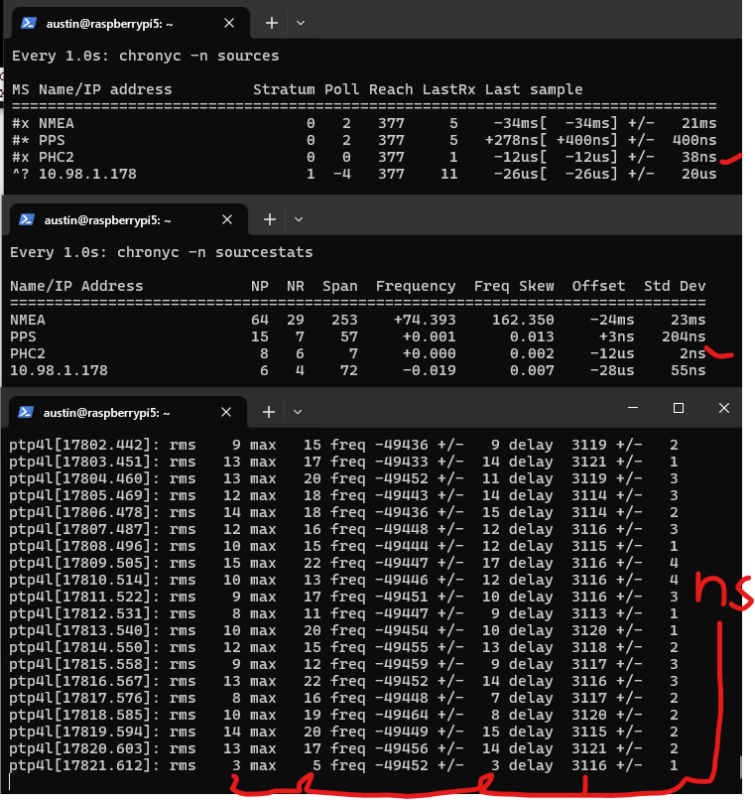

Here we see a few key items:

- System time – updated every second, this is the difference between what Chrony thinks the time is and what the system clock says. We are showing 64 nanoseconds

- Last offset – how far off the system clock was at last update (from whatever source is selected). I got lucky with this capture, which shows 0 nanoseconds off

- RMS offset – a long term average of error. I expect this to get to low double-digit nanoseconds. Decreasing further is the topic of the next post.

- Frequency – the drift of the system clock. This number can kind of be whatever, as long as it is stable, but the closer to zero, the better. There is always a temperature correlation with the oscillator temperature vs frequency. This is what chrony is constantly correcting.

- Residual frequency – difference from what the frequency is and what it should be (as determined by the selected source)

- Skew – error in the frequency – lower is better. Less than 0.05 is very stable.

- Root delay/dispersion – basically how far from the “source” of truth your chrony is

- Update interval – self explanatory
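If you want to pull these fields into a script (for alerting or logging), here is a rough Python sketch that parses the `Name : value` layout of `chronyc tracking`. The two sample lines are hand-written to mirror the 64 nanosecond and 0 nanosecond figures from the capture described above, the "fast" direction in the sample is an assumption, and the function names are made up.

```python
# Hand-written sample in chronyc tracking's "Name : value" layout (illustrative only)
SAMPLE = """\
System time     : 0.000000064 seconds fast of NTP time
Last offset     : +0.000000000 seconds
"""


def parse_tracking(text):
    """Split each 'Name : value' line into a dict entry."""
    fields = {}
    for line in text.splitlines():
        key, sep, value = line.partition(":")
        if sep:
            fields[key.strip()] = value.strip()
    return fields


def seconds_to_ns(value):
    """Convert a value like '+0.000000064 seconds ...' to integer nanoseconds."""
    return round(float(value.split()[0]) * 1e9)


def system_time_ns(fields):
    """Signed system clock error in ns: positive when fast, negative when slow."""
    value = fields["System time"]
    ns = seconds_to_ns(value)
    return -ns if "slow" in value else ns


fields = parse_tracking(SAMPLE)
print("system time error (ns):", system_time_ns(fields))
print("last offset (ns):", seconds_to_ns(fields["Last offset"]))
```

To run it against a live system you could feed it the text from `chronyc tracking` (for example via `subprocess.run`), which is left out here so the sketch runs anywhere.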

9 – Grafana dashboard showing Chrony stats

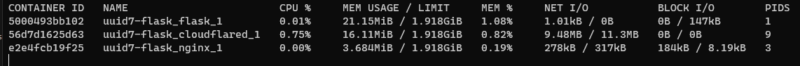

And to track the results over time, I feed the Chrony data to InfluxDB via Telegraf. Another topic for a future post. The dashboard looks like this:

Here we can see a gradual increase in the frequency on the Raspberry Pi 5 system clock. The offsets are almost always within 1 microsecond, with average of 16.7 nanoseconds. The spikes in skew correspond to the spikes in offsets. Something is happening on the Pi to probably spike CPU loading (even though I have the CPU throttled to powersave mode), which speeds things up and affects the timing via either powerstate transitions or oscillator temperature changes or both.

Conclusion

In 2025, a GPS sending PPS to Raspberry Pi is still a great way to get super accurate time. In this Chrony config, I showed how to get time of day, as well as precision seconds without an external source. Our offsets are well under one microsecond.

In the next post, we will examine how to maximize the performance (by minimizing the frequency skew!) of our Raspberry Pi/PPS combination – World’s Most Stable Raspberry Pi? 81% Better NTP with Thermal Management.

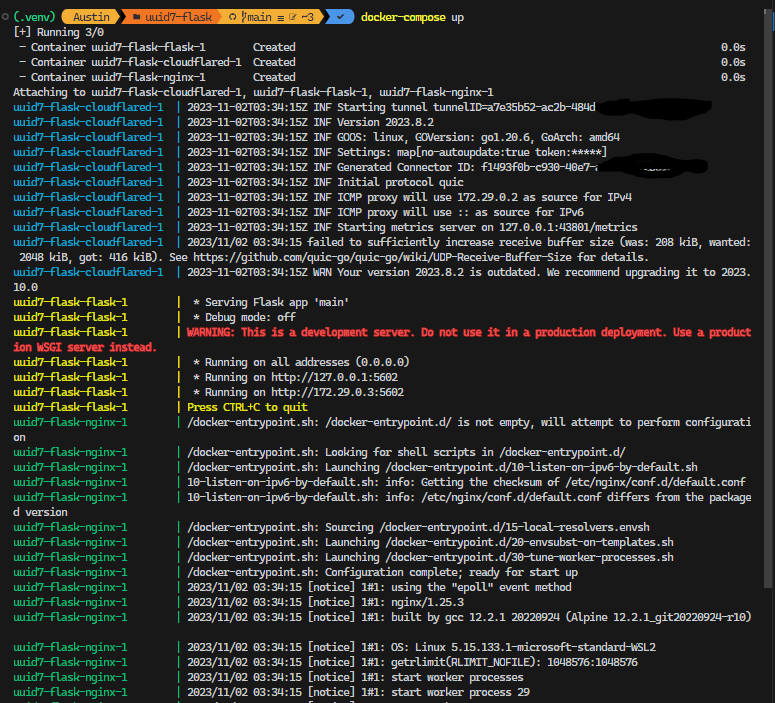

And for the post after that – here’s a preview of using PTP from an Oscilloquartz OSA 5401 SyncPlug. Note the standard deviations and offsets. This device has an OCXO – oven controlled crystal oscillator – that has frequency stability measured in ppb (parts per billion). It also has a NEO-M8T timing chip, the same one I mentioned in the beginning of this post.

The OSA 5401 SyncPlug is quite difficult to come by (I scored mine for $20 – shoutout to the servethehome.com forums! this device likely had a list price in the thousands) so I’ll also show how to just set up a PTP grandmaster (that’s the official term) on your Raspberry Pi.

Next Steps

- Document commands to set ublox module to 16 Hz timepulses

- Document commands to set ublox to survey-in upon power on

- Document commands to set ublox to use GPS + Beidou + Galileo

- Document Chrony config to use 16 Hz timepulses

- Configure Pi to use performance CPU governor to eliminate CPU state switch latency – https://austinsnerdythings.com/2025/11/24/worlds-most-stable-raspberry-pi-81-better-ntp-with-thermal-management/

- Telegraf/InfluxDB/Grafana configuration for monitoring

- Temperature-controlled enclosure