Background for why I wanted to make a Proxmox Ubuntu cloud-init image

I have recently ventured down the path of trying to learn CI/CD concepts. I have tried Docker multiple times and haven’t enjoyed its nuances any of those times. Coming from a ‘one VM per service’ background, I find LXC/LXD containers far easier to understand than Docker. LXC/LXD containers can be assigned IP addresses (or get them from DHCP) and otherwise behave almost exactly like a VM from a networking perspective. Docker’s networking model is quite a bit more nuanced. Lots of people say it’s easier, but having everything run on ‘localhost:[high number port]’ doesn’t work well when you’ve got lots of services unless you add a reverse proxy like Traefik, which is yet another configuration step.

It is so much easier to just have an LXC container get an IP via DHCP and then be accessible by hostname right off the bat (I use pfSense for DHCP/DNS – all DHCP leases are entered right into DNS). Regardless, I know Kubernetes is the new hotness, so I figured I need to learn it. Every tutorial says you need a master and at least two worker nodes. No sense building three separate virtual machines by hand – let’s use the magic of virtualization and clone some images! I plan on using Terraform to deploy the virtual machines for my Kubernetes cluster (as in, I’ve already used this Proxmox Ubuntu cloud-init image to make my own Kubernetes nodes but haven’t documented it yet).

Overview

The quick summary for this tutorial is:

- Download a base Ubuntu cloud image

- Install some packages into the image

- Create a Proxmox VM using the image

- Convert it to a template

- Clone the template into a full VM and set some parameters

- Automate it so it runs on a regular basis (extra credit)

- ???

- Profit!

YouTube Video Link

If you prefer video versions to follow along, please head on over to https://youtu.be/1sPG3mFVafE for a live action video of me creating the Proxmox Ubuntu cloud-init image and why we’re running each command.

#1 – Downloading the base Ubuntu image

Luckily, Ubuntu (my preferred distro, guessing others do the same) provides base images that are updated on a regular basis – https://cloud-images.ubuntu.com/. We are interested in the “current” release of Ubuntu 20.04 Focal, which is the current Long Term Support version. Further, since Proxmox uses KVM, we will be pulling that image:

wget https://cloud-images.ubuntu.com/focal/current/focal-server-cloudimg-amd64.img
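Before customizing the download, it can be worth checking it against the SHA256SUMS file Ubuntu publishes in the same release directory (fetch it alongside the image, e.g. `wget https://cloud-images.ubuntu.com/focal/current/SHA256SUMS`). The function below is a minimal sketch of that check, not part of the original process; the name `verify_image` is hypothetical, and it assumes GNU `sha256sum` and the usual `<hash> *<filename>` line format.

```bash
#!/usr/bin/env bash
# Hypothetical helper: succeed only if FILE's SHA-256 matches its entry in SUMS.
# Assumes SHA256SUMS lines of the form "<hash> *<filename>" (the "*" is optional).
verify_image() {
  local file="$1" sums="$2" expected actual
  # Look up the expected hash for this file name, ignoring the binary-mode "*".
  expected="$(awk -v f="$file" '{ name = $2; sub(/^\*/, "", name); if (name == f) print $1 }' "$sums")"
  if [[ -z "$expected" ]]; then
    echo "no checksum entry for $file in $sums" >&2
    return 1
  fi
  # Hash the local file and keep only the hex digest.
  actual="$(sha256sum "$file" | awk '{ print $1 }')"
  if [[ "$expected" != "$actual" ]]; then
    echo "checksum mismatch for $file" >&2
    return 1
  fi
  echo "$file: checksum OK"
}
```

Run it from the directory holding both downloads: `verify_image focal-server-cloudimg-amd64.img SHA256SUMS`. On recent GNU coreutils, `sha256sum --check --ignore-missing SHA256SUMS` does much the same in one line; the function just makes the failure explicit.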

#2 – Install packages

It took me quite a while into my Terraform debugging process to determine that qemu-guest-agent wasn’t included in the cloud-init image. Why it isn’t, I have no idea. Luckily, there is a very cool tool that I just learned about that enables installing packages directly into an image. The tool is called virt-customize and it comes in the libguestfs-tools package (“libguestfs is a set of tools for accessing and modifying virtual machine (VM) disk images” – https://www.libguestfs.org/).

Install the tools:

sudo apt update -y && sudo apt install libguestfs-tools -y

Then install qemu-guest-agent into the newly downloaded image:

sudo virt-customize -a focal-server-cloudimg-amd64.img --install qemu-guest-agent

At this point you can presumably install whatever else you want into the image but I haven’t tested installing other packages. qemu-guest-agent was what I needed to get the VM recognized by Terraform and accessible.
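If you do want more than qemu-guest-agent, virt-customize’s `--install` option accepts a comma-separated package list, so everything can go in one pass over the image. A minimal sketch, assuming the extra packages (curl, vim) are only examples; `run` and `customize_image` are hypothetical helpers, and `DRY_RUN` defaults to 1 so the command is printed rather than executed until you set `DRY_RUN=0`.

```bash
#!/usr/bin/env bash
IMAGE="${IMAGE:-focal-server-cloudimg-amd64.img}"
# qemu-guest-agent is the package the post needs; curl and vim are only examples.
PACKAGES=(qemu-guest-agent curl vim)

# Print the command when DRY_RUN=1 (the default), otherwise execute it.
run() {
  if [[ "${DRY_RUN:-1}" == 1 ]]; then
    printf '%s\n' "$*"
  else
    "$@"
  fi
}

# customize_image IMAGE PKG...: install all packages in a single virt-customize call.
customize_image() {
  local image="$1" pkgs
  shift
  # Join the package names with commas in a subshell so IFS is untouched here.
  pkgs="$(IFS=,; printf '%s' "$*")"
  run sudo virt-customize -a "$image" --install "$pkgs"
}
```

For example, `customize_image "$IMAGE" "${PACKAGES[@]}"` prints `sudo virt-customize -a focal-server-cloudimg-amd64.img --install qemu-guest-agent,curl,vim`; prefix it with `DRY_RUN=0` to actually run it.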

Update 2021-12-30 – it is possible to inject the SSH keys into the cloud image itself before turning it into a template and VM. You need to create a user first and the necessary folders:

# not quite working yet. skip this and continue
#sudo virt-customize -a focal-server-cloudimg-amd64.img --run-command 'useradd austin'
#sudo virt-customize -a focal-server-cloudimg-amd64.img --run-command 'mkdir -p /home/austin/.ssh'
#sudo virt-customize -a focal-server-cloudimg-amd64.img --ssh-inject austin:file:/home/austin/.ssh/id_rsa.pub
#sudo virt-customize -a focal-server-cloudimg-amd64.img --run-command 'chown -R austin:austin /home/austin'
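One variant that may get closer is to do all of these steps in a single virt-customize call and let `useradd -m` create the home directory (the approach a commenter below used for Jammy). This is an untested sketch, not a fix confirmed by the post: the user name `austin` and key path come from the commented lines above, `inject_user` and `run` are hypothetical helpers, and the new user has no password and is not in the sudo group. Per its documentation, virt-customize applies these operations in command-line order, so the user exists before `--ssh-inject` runs.

```bash
#!/usr/bin/env bash
# Print the command when DRY_RUN=1 (the default; quoting is not shown), otherwise run it.
run() {
  if [[ "${DRY_RUN:-1}" == 1 ]]; then printf '%s\n' "$*"; else "$@"; fi
}

# inject_user IMAGE USER PUBKEY: create USER with a home dir and authorize PUBKEY.
inject_user() {
  local image="$1" user="$2" pubkey="$3"
  run sudo virt-customize -a "$image" \
    --run-command "useradd -m -s /bin/bash $user" \
    --run-command "mkdir -p /home/$user/.ssh" \
    --ssh-inject "$user:file:$pubkey" \
    --run-command "chown -R $user:$user /home/$user"
}
```

Usage: `DRY_RUN=0 inject_user focal-server-cloudimg-amd64.img austin /home/austin/.ssh/id_rsa.pub`.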

#3 – Create a Proxmox virtual machine using the newly modified image

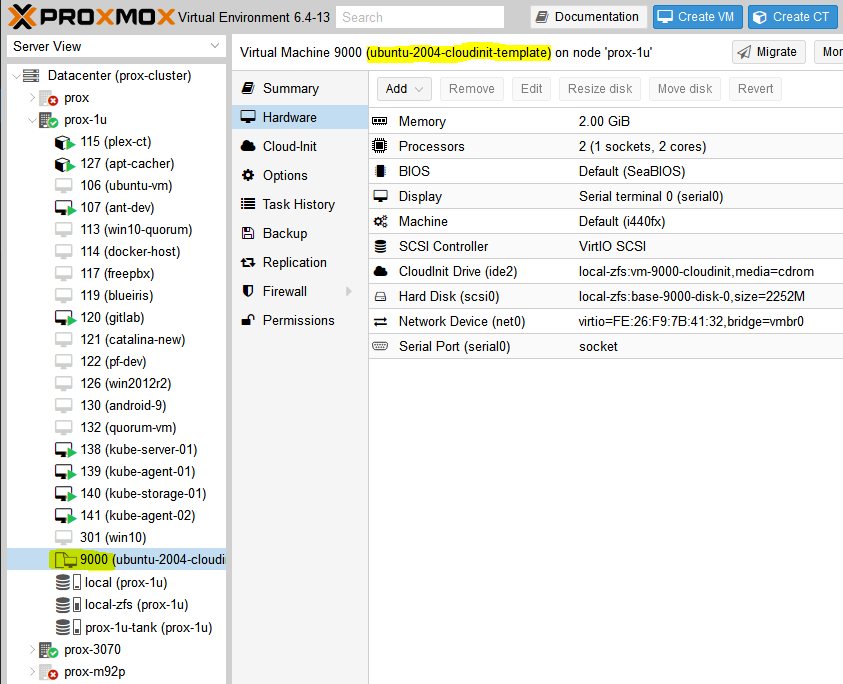

The commands here should be relatively self-explanatory, but in general we are:

- creating a VM (VMID=9000; basically every other resource I saw used this ID, so we will too) with basic resources (2 cores, 2048 MB) and networking on a virtio adapter attached to vmbr0

- importing the image to storage (your storage here will be different if you’re not using ZFS, probably either ‘local’ or ‘local-lvm’)

- attaching the imported image as scsi0 and setting it as the boot disk

- adding the cloud-init drive on ide2 (which apparently appears as a CD-ROM to the VM, at least on initial boot)

- adding a virtual serial port and using it as the display

- enabling the QEMU guest agent

I had only used qm to force stop VMs before this, but it’s pretty useful.

sudo qm create 9000 --name "ubuntu-2004-cloudinit-template" --memory 2048 --cores 2 --net0 virtio,bridge=vmbr0
sudo qm importdisk 9000 focal-server-cloudimg-amd64.img local-zfs
sudo qm set 9000 --scsihw virtio-scsi-pci --scsi0 local-zfs:vm-9000-disk-0
sudo qm set 9000 --boot c --bootdisk scsi0
sudo qm set 9000 --ide2 local-zfs:cloudinit
sudo qm set 9000 --serial0 socket --vga serial0
sudo qm set 9000 --agent enabled=1
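The disk name passed to `--scsi0` depends on the storage type. On ZFS or LVM storage it is `local-zfs:vm-9000-disk-0` as above, but two commenters below on directory/NFS-backed storage needed the `<storage>:9000/vm-9000-disk-0.raw` form. The sketch below pulls the VMID and storage into parameters and picks the volume name accordingly. `create_vm`, `disk_volume` and `run` are hypothetical helpers, the `dir` switch is an assumption you set yourself, and `DRY_RUN` defaults to 1 (print only). If in doubt, use whatever volume name `qm importdisk` prints.

```bash
#!/usr/bin/env bash
# Print the command when DRY_RUN=1 (the default), otherwise run it.
run() {
  if [[ "${DRY_RUN:-1}" == 1 ]]; then printf '%s\n' "$*"; else "$@"; fi
}

# disk_volume STORAGE VMID [dir]: volume name of the first imported disk.
# Assumption: "dir" storage (e.g. 'local' or NFS) names it <vmid>/vm-<vmid>-disk-0.raw.
disk_volume() {
  local storage="$1" vmid="$2" kind="${3:-block}"
  if [[ "$kind" == dir ]]; then
    printf '%s:%s/vm-%s-disk-0.raw\n' "$storage" "$vmid" "$vmid"
  else
    printf '%s:vm-%s-disk-0\n' "$storage" "$vmid"
  fi
}

# create_vm VMID STORAGE IMAGE [dir]: the step #3 commands, parameterized.
create_vm() {
  local vmid="$1" storage="$2" image="$3" kind="${4:-block}"
  run sudo qm create "$vmid" --name ubuntu-2004-cloudinit-template --memory 2048 --cores 2 --net0 virtio,bridge=vmbr0
  run sudo qm importdisk "$vmid" "$image" "$storage"
  run sudo qm set "$vmid" --scsihw virtio-scsi-pci --scsi0 "$(disk_volume "$storage" "$vmid" "$kind")"
  run sudo qm set "$vmid" --boot c --bootdisk scsi0
  run sudo qm set "$vmid" --ide2 "$storage:cloudinit"
  run sudo qm set "$vmid" --serial0 socket --vga serial0
  run sudo qm set "$vmid" --agent enabled=1
}
```

For the post’s setup that is `create_vm 9000 local-zfs focal-server-cloudimg-amd64.img`; on directory storage, something like `create_vm 9000 local focal-server-cloudimg-amd64.img dir`.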

You can start the VM up at this point if you’d like and make any other changes you want, because the next step is converting it to a template. If you do boot it, I’ll be completely honest: I have no idea how to log into it. I actually just googled this because I don’t want to leave you without an answer – it looks like you can use the same virt-customize tool we used before to set a root password, according to Stack Overflow (https://stackoverflow.com/questions/29137679/login-credentials-of-ubuntu-cloud-server-image). I’m not going to put that into a command window here, because cloud-init is really meant for public/private key authentication (see post here for a quick SSH tutorial).

#4 – Convert VM to a template

OK, if you made any changes, shut down the VM. If you didn’t boot the VM, that’s perfectly fine too. Now we need to convert it to a template:

sudo qm template 9000

And now we have a functioning template!

#5 – Clone the template into a full VM and set some parameters

From this point you can clone the template as much as you want. But each time you do so, it makes sense to set some parameters, namely the SSH keys present in the VM and the IP address for the main interface. You could also add the SSH keys with virt-customize, but I like doing it here.

First, clone the VM (here we are cloning the template with ID 9000 to a new VM with ID 999):

sudo qm clone 9000 999 --name test-clone-cloud-init

Next, set the SSH keys and IP address:

sudo qm set 999 --sshkey ~/.ssh/id_rsa.pub
sudo qm set 999 --ipconfig0 ip=10.98.1.96/24,gw=10.98.1.1
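The clone steps can be wrapped up so each new VM gets its key, address and a bigger disk in one go. The resize comes from the comments below (the cloud image’s root disk is only about 2.2 GB, and one reader’s VM would not finish booting until it was enlarged); `+8G` is an arbitrary example. `clone_vm` and `run` are hypothetical helpers, passing `dhcp` as the address uses `ip=dhcp` instead of a static IP, and `DRY_RUN` defaults to 1 (print only).

```bash
#!/usr/bin/env bash
# Print the command when DRY_RUN=1 (the default), otherwise run it.
run() {
  if [[ "${DRY_RUN:-1}" == 1 ]]; then printf '%s\n' "$*"; else "$@"; fi
}

# clone_vm TEMPLATE VMID NAME IP [GATEWAY]
# IP is a CIDR address such as 10.98.1.96/24, or "dhcp".
clone_vm() {
  local template="$1" vmid="$2" name="$3" ip="$4" gw="${5:-}"
  local ipconfig="ip=$ip"
  if [[ -n "$gw" ]]; then
    ipconfig+=",gw=$gw"
  fi
  run sudo qm clone "$template" "$vmid" --name "$name"
  run sudo qm set "$vmid" --sshkey "$HOME/.ssh/id_rsa.pub"
  run sudo qm set "$vmid" --ipconfig0 "$ipconfig"
  # Grow the disk before first boot; cloud-init on Ubuntu normally expands root to fit.
  run sudo qm resize "$vmid" scsi0 +8G
  run sudo qm start "$vmid"
}
```

Reproducing the steps above: `DRY_RUN=0 clone_vm 9000 999 test-clone-cloud-init 10.98.1.96/24 10.98.1.1`.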

It’s now ready to start up!

sudo qm start 999

You should be able to log in without any problems (after trusting the SSH fingerprint). Note that the username is ‘ubuntu’, not whatever the username is for the key you provided.

ssh ubuntu@10.98.1.96
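If you rebuild the clone on the same IP, SSH will complain that the host key changed, which is why my original notes at the bottom drop the old known_hosts entry first. A small sketch of those two steps; `reconnect` and `run` are hypothetical helpers, the default user `ubuntu` matches the image’s default user, and `DRY_RUN` defaults to 1 (print only).

```bash
#!/usr/bin/env bash
# Print the command when DRY_RUN=1 (the default), otherwise run it.
run() {
  if [[ "${DRY_RUN:-1}" == 1 ]]; then printf '%s\n' "$*"; else "$@"; fi
}

# reconnect HOST [USER]: forget HOST's old host key, then log in as USER.
reconnect() {
  local host="$1" user="${2:-ubuntu}"
  # A rebuilt VM on the same IP presents new host keys; remove the stale entry.
  run ssh-keygen -f "$HOME/.ssh/known_hosts" -R "$host"
  run ssh "$user@$host"
}
```

Usage: `DRY_RUN=0 reconnect 10.98.1.96`.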

Once you’re happy with how things worked, you can stop the VM and clean up the resources:

sudo qm stop 999 && sudo qm destroy 999
rm focal-server-cloudimg-amd64.img

#6 – Automating the process

I have not done so yet, but if you create VMs on a somewhat regular basis, it wouldn’t be hard to stick all of the above into a simple shell script (update 2022-04-19: simple shell script below) and run it via cron on a weekly basis or whatever frequency you prefer. I can’t tell you how many times I make a new VM from whatever .iso I downloaded and the first task is apt upgrade taking forever to run (‘sudo apt update’ –> “176 packages can be upgraded”). Having a nice template always ready to go would solve that issue and would frankly save me a ton of time.

#6.5 – Shell script to create template

# installing libguestfs-tools only required once, prior to first run
sudo apt update -y
sudo apt install libguestfs-tools -y
# remove existing image in case last execution did not complete successfully
# (-f so this doesn't error when there is no leftover image)
rm -f focal-server-cloudimg-amd64.img
wget https://cloud-images.ubuntu.com/focal/current/focal-server-cloudimg-amd64.img
sudo virt-customize -a focal-server-cloudimg-amd64.img --install qemu-guest-agent
sudo qm create 9000 --name "ubuntu-2004-cloudinit-template" --memory 2048 --cores 2 --net0 virtio,bridge=vmbr0
sudo qm importdisk 9000 focal-server-cloudimg-amd64.img local-zfs
sudo qm set 9000 --scsihw virtio-scsi-pci --scsi0 local-zfs:vm-9000-disk-0
sudo qm set 9000 --boot c --bootdisk scsi0
sudo qm set 9000 --ide2 local-zfs:cloudinit
sudo qm set 9000 --serial0 socket --vga serial0
sudo qm set 9000 --agent enabled=1
sudo qm template 9000
rm focal-server-cloudimg-amd64.img
echo "next up, clone VM, then expand the disk"
echo "you also still need to copy ssh keys to the newly cloned VM"
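A slightly more defensive take on the script above, as a sketch: it stops on the first error (`set -euo pipefail`), destroys an old template with the same VMID so it can be re-run unattended, and keeps the VMID and storage in variables. Assumptions: VMID 9000 and `local-zfs` as in the post; the libguestfs-tools install is left out since it is a one-time step; `build_template`, `template_exists` and `run` are hypothetical helpers; `DRY_RUN` defaults to 1, so nothing changes until you run it with `DRY_RUN=0`. Proxmox should refuse to destroy a template that still has linked clones, in which case the script stops there.

```bash
#!/usr/bin/env bash
set -euo pipefail

VMID="${VMID:-9000}"
STORAGE="${STORAGE:-local-zfs}"
IMAGE="focal-server-cloudimg-amd64.img"
URL="https://cloud-images.ubuntu.com/focal/current/$IMAGE"

# Print the command when DRY_RUN=1 (the default), otherwise run it.
run() {
  if [[ "${DRY_RUN:-1}" == 1 ]]; then printf '%s\n' "$*"; else "$@"; fi
}

# Only query Proxmox on real runs; a dry run assumes there is no old template.
template_exists() {
  [[ "${DRY_RUN:-1}" != 1 ]] && sudo qm status "$VMID" &>/dev/null
}

build_template() {
  if template_exists; then
    run sudo qm destroy "$VMID"
  fi
  run rm -f "$IMAGE"
  run wget "$URL"
  run sudo virt-customize -a "$IMAGE" --install qemu-guest-agent
  run sudo qm create "$VMID" --name ubuntu-2004-cloudinit-template --memory 2048 --cores 2 --net0 virtio,bridge=vmbr0
  run sudo qm importdisk "$VMID" "$IMAGE" "$STORAGE"
  run sudo qm set "$VMID" --scsihw virtio-scsi-pci --scsi0 "$STORAGE:vm-$VMID-disk-0"
  run sudo qm set "$VMID" --boot c --bootdisk scsi0
  run sudo qm set "$VMID" --ide2 "$STORAGE:cloudinit"
  run sudo qm set "$VMID" --serial0 socket --vga serial0
  run sudo qm set "$VMID" --agent enabled=1
  run sudo qm template "$VMID"
  run rm -f "$IMAGE"
}

# Build when executed directly (not when sourced).
if [[ "${BASH_SOURCE[0]:-$0}" == "$0" ]]; then
  build_template
fi
```

Saved as, say, `/root/build-template.sh` (a hypothetical path) and made executable, a root crontab entry such as `0 3 * * 0 DRY_RUN=0 /root/build-template.sh` (Sundays at 03:00) would cover the weekly cron idea from #6.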

#7-8 – Using this template with Terraform to automate VM creation

Next post – How to deploy VMs in Proxmox with Terraform

References

https://matthewkalnins.com/posts/home-lab-setup-part-1-proxmox-cloud-init/

https://registry.terraform.io/modules/sdhibit/cloud-init-vm/proxmox/latest/examples/ubuntu_single_vm

My original notes

https://matthewkalnins.com/posts/home-lab-setup-part-1-proxmox-cloud-init/
https://registry.terraform.io/modules/sdhibit/cloud-init-vm/proxmox/latest/examples/ubuntu_single_vm
# create cloud image VM
wget https://cloud-images.ubuntu.com/focal/20210824/focal-server-cloudimg-amd64.img
sudo qm create 9000 --name "ubuntu-2004-cloudinit-template" --memory 2048 --cores 2 --net0 virtio,bridge=vmbr0
# to install qemu-guest-agent or whatever into the guest image
#sudo apt-get install libguestfs-tools
#virt-customize -a focal-server-cloudimg-amd64.img --install qemu-guest-agent
sudo qm importdisk 9000 focal-server-cloudimg-amd64.img local-zfs
sudo qm set 9000 --scsihw virtio-scsi-pci --scsi0 local-zfs:vm-9000-disk-0
sudo qm set 9000 --boot c --bootdisk scsi0
sudo qm set 9000 --ide2 local-zfs:cloudinit
sudo qm set 9000 --serial0 socket --vga serial0
sudo qm template 9000
# clone cloud image to new VM
sudo qm clone 9000 999 --name test-clone-cloud-init
sudo qm set 999 --sshkey ~/.ssh/id_rsa.pub
sudo qm set 999 --ipconfig0 ip=10.98.1.96/24,gw=10.98.1.1
sudo qm start 999
# remove known host because SSH key changed
ssh-keygen -f "/home/austin/.ssh/known_hosts" -R "10.98.1.96"
# ssh in
ssh -i ~/.ssh/id_rsa ubuntu@10.98.1.96
# stop and destroy VM
sudo qm stop 999 && sudo qm destroy 999

35 replies on “How to create a Proxmox Ubuntu cloud-init image”

[…] A Kubernetes cluster requires at least 3 VMs/bare metal machines. In my last post, I wrote about how to create a Ubuntu cloud-init template for Proxmox. In this post, we’ll take that template and use it to deploy a couple VMs via automation […]

#3 – sudo qm set 9000 --agent 1

Added qemu-guest-agent to the image in #2, might as well enable it.

I must have qemu-guest-agent enabled by default somewhere because they all have it already. Regardless, this is a good idea so I’ll add it. Thanks for the comment!

Well you did enable it in the Terraform post:

agent = 1

Great series, enjoying this.

Great posts, however I made some observations myself that I wanted to share:

You can indeed boot the VM and install custom packages. However, when you boot the VM for the first time it gets a machine-id assigned (/etc/machine-id), which is used for DHCP requests (at least with OPNsense in my case). Therefore, if you clone it, the machine-id stays the same and you get the same IP. That’s the reason I searched for how to add the guest agent without booting first. Maybe you can reset the machine-id (it probably has something to do with the cloud-init first boot stage), but I haven’t investigated further because installing the guest agent was enough for me.

Secondly, if you do want to boot the VM and log in, you can provide a password in the VM’s Cloud-Init tab in the Proxmox UI, because the cloud image has cloud-init enabled. Might be useful for quick debugging.

I have installed libguestfs on Proxmox, but when running the command below:

virt-customize -a focal-server-cloudimg-amd64.img --install qemu-guest-agent

Getting below error:

root@homeserver:~# virt-customize -a focal-server-cloudimg-amd64.img --install qemu-guest-agent

[ 0.0] Examining the guest …

virt-customize: error: libguestfs error: /usr/bin/qemu-system-x86_64 killed

by signal 11 (Segmentation fault).

To see full error messages you may need to enable debugging.

Do:

export LIBGUESTFS_DEBUG=1 LIBGUESTFS_TRACE=1

and run the command again. For further information, read:

http://libguestfs.org/guestfs-faq.1.html#debugging-libguestfs

You can also run ‘libguestfs-test-tool’ and post the *complete* output

into a bug report or message to the libguestfs mailing list.

If reporting bugs, run virt-customize with debugging enabled and include

the complete output:

virt-customize -v -x […]

root@homeserver:~#

Looks like a bug with the current versions of things:

https://forum.proxmox.com/threads/guestmount-resulting-in-a-qemu-segfault-since-the-last-updates.99665/

https://bugzilla.proxmox.com/show_bug.cgi?id=3728

# apt install --no-install-recommends --no-install-suggests libguestfs-tools

Hi, great article, I’m learning tons.

One little thing I noticed is that the “qm set … --scsi0” command is wrong.

I had to do this instead:

qm set 9000 --scsihw virtio-scsi-pci --scsi0 ext8tb1:9000/vm-9000-disk-0.raw

Obviously, my storage is different, but I had to add the full file path as reported by the importdisk command.

Oh, maybe it’s the version of Proxmox I’m using? Mine is 7.1-7.

It’s the storage type you used. I literally ran through these commands a few days ago and everything worked fine for me. I even adjusted the agent command to fix an issue.

Here’s my bash history list that worked:

29 sudo qm create 9000 --name "ubuntu-2004-cloudinit-template" --memory 2048 --cores 2 --net0 virtio,bridge=vmbr0
30 sudo qm importdisk 9000 focal-server-cloudimg-amd64.img local-zfs
31 sudo qm set 9000 --scsihw virtio-scsi-pci --scsi0 local-zfs:vm-9000-disk-0
32 sudo qm set 9000 --boot c --bootdisk scsi0
33 sudo qm set 9000 --ide2 local-zfs:cloudinit
34 sudo qm set 9000 --serial0 socket --vga serial0

This was on proxmox-ve 7.1-1, with qemu-server 7.1-4.

Thanks for your tutorial.

Since my update to the latest version, the template no longer works. No HDD is created. In the template there is only one unused disk, but I think that was normal.

Can you confirm that it does not work with the latest version of Proxmox?

Worked fine for me a couple weeks ago with Proxmox 7.1-1

Clearly you are already very aware of the HashiCorp tools, so why not just use Packer instead of all this command-line stuff? You can install the qemu-guest-agent (and any other packages you want to bake in, keeping in mind the trade-offs between bake vs. fry) in cloud-init. Automation is really the best approach to repeatable builds, right? Especially when the implementation is a ‘hands free’ standard IaC pattern. One simple ‘packer build host.json’ command, and shortly thereafter a freshly minted Proxmox template. Then by all means use Terraform and friends (Vault, scripting, whatever) for the final mile processing at launch time (fry).

I actually have not heard of Packer until your comment. I will look into it!

Your tutorial has been very useful! Thank you.

I wish Proxmox had exposed an API for “qm importdisk”, everything could have been done with Packer/Terraform. Because of that missing functionality one has to interact with native “qm” commands.

```
virt-sysprep -a focal-server-cloudimg-amd64.img
```

This will resolve duplicate DHCP IP addresses by resetting the machine-id along with other clean ups.

Hi Austin,

First, congrats for this post and the series.

I followed the steps to create two templates and correctly instantiated two VMs; in my case I used Rocky and Ubuntu cloud images.

When I start the machines, the VMs don’t boot at all: I get a “Probing EDD” message when booting the Rocky machine, and an unsuccessful boot on the Ubuntu machine.

I noticed that the Display is configured as a Serial Terminal, which I understand is for connecting later via ipmitool. But did you install any other agent or driver to support, for example, the SPICE agent and compatibility with this option in the Proxmox configuration?

Thanks for your dedication!

Hi there, I have not seen that error message before, for any VM. I didn’t install any other driver/agent. Everything just worked for me. Did you watch the YouTube video that goes with this tutorial? It shows everything from beginning to end.

Hi Austin,

I followed the steps posted in this blog entry. Let me follow the video to check that I followed all the steps correctly, and if that doesn’t work, I will try the same version of Ubuntu as you. I was using a more recent release.

Regards!

Hi Austin,

Solved: since my storage is different from yours, some commands are slightly different.

Now I’ve created Ubuntu 2204, 2004 and Rocky 8.5 without problems.

Thanks for your posts.

I’m seeing an error that sounds similar to yours. Would you be able to post your different commands?

[…] like to give credit where credit is due. This post and the contents have been inspired by Austin @ AustinsNerdyThings.com and another article at […]

Very nice article. I used your example of generating a user and modified it to create a working user:

# Use mkpasswd -m SHA-512 to create a password for the usermod -p command,
# ensure to escape any $ chars to avoid variable substitution:
virt-customize -a jammy-server-cloudimg-amd64.img --run-command 'useradd -m viscous -s /bin/bash'
virt-customize -a jammy-server-cloudimg-amd64.img --run-command 'usermod -aG adm,sudo,dialout,cdrom,floppy,audio,dip,video,plugdev,netdev,lxd viscous'
virt-customize -a jammy-server-cloudimg-amd64.img --run-command "usermod -p '<ENCRYPTED PASSWORD HERE WITH \ ESCAPED $ CHARS>' viscous"
virt-customize -a jammy-server-cloudimg-amd64.img --run-command 'mkdir -p /home/viscous/.ssh'
virt-customize -a jammy-server-cloudimg-amd64.img --ssh-inject viscous:file:/root/id_rsa.pub
virt-customize -a jammy-server-cloudimg-amd64.img --run-command 'chown -R viscous. /home/viscous'

Threw this together so I can create templates from cloud images and automate the process. Sloppy but it works. Feel free to jack parts of it and edit to your environment.

#!/bin/bash
#preface and iso directory listing
clear
iso_dir="/mnt/pve/LocalNas/template/iso/"
iso_list=$(ls $iso_dir)
vm_list=$(pvesh get /cluster/resources --type vm)
echo "Before starting, the image you want to use must be uploaded to $iso_dir . This can be done in the ui! Currently loaded isos listed below."
echo
echo
echo "$iso_list"
echo
echo
read -r -s -p $'Press enter to continue, or ctrl+c to quit...'
echo
echo
clear
#ask for number to assign vm
echo "What would you like the vmid to be? Please select a unique number. Currently in use vmids listed below."
echo
echo
echo "$vm_list"
echo
echo
read -p "vmid: " vmid
clear
echo
echo
#ask what you would like to name the vm
echo "What would you like to name this vm? Choose something that can easily identify the machine"
echo
echo
echo
read -p "vm name: " vmname
echo
echo
echo
clear
#ask user to pick image
PS3="Please choose an image from the list: "
select image in $iso_list
do
  echo "You chose: $image"
  break
done
echo
echo
#How much RAM
echo "How many gb of Ram would you like to use?"
echo
echo
read -p "Ram in GB: " ram
echo
echo
gigs=$(($ram * 1024))
#cores
echo "How many cpu cores would you like to use?"
echo
echo
read -p "Number of CPU cores: " cores
echo
echo
#network
PS3="which net would you like to use? vmbr0 - 20 subnet vmbr1 - lan subnet: "
select net in vmbr0 vmbr1
do
  echo "You chose: $net"
  break
done
echo
echo
#add serial terminal if ubuntu
y="qm set $vmid --serial0 socket --vga serial0"
echo "Is this an ubuntu image? y/n"
echo
read -p "y/n : " ubuntu
echo
echo
virt-customize -a "$iso_dir""$image" --install qemu-guest-agent
qm create "$vmid" --name "$vmname" --memory "$gigs" --cores "$cores" --net0 virtio,bridge="$net"
qm importdisk "$vmid" "$iso_dir""$image" LocalNas
qm set "$vmid" --scsihw virtio-scsi-pci --scsi0 LocalNas:"$vmid"/vm-"$vmid"-disk-0.raw
qm set "$vmid" --boot c --bootdisk scsi0
qm set "$vmid" --ide2 LocalNas:cloudinit
qm set "$vmid" --agent enabled=1
# run the serial-terminal command stored in $y only for ubuntu images
if [ "$ubuntu" = "y" ]; then $y; fi

Hi,

is there a way to change disk size (which is only 2250MB) during VM creation?

Yes, using the qm resize command:

qm resize [vmid] [disk] [size]

qm resize 100 virtio0 +5G

https://pve.proxmox.com/wiki/Resize_disks

[…] a new VM to transfer this very blog to my shiny, new co-located server. I was going to use the Ubuntu 20.04 Proxmox Cloud Init Image Script template I wrote up a bit ago. Then I stopped and realized I should update it. So I changed 2004 everywhere […]

Thanks! It works! I only had an issue related to the disk size: the VM hung on “starting serial terminal on interface” and I couldn’t SSH to it (connection refused). Looking closer, I found that the disk was too small, so I increased it with `sudo qm resize 721 scsi0 +2G`. If anyone is getting the same problem, give it a try. Great post, thanks again!

Nice troubleshooting and fix! I’ve definitely forgotten to increase the disk size a couple of times and it’s bitten me pretty quickly.

#!/usr/bin/env bash
create_clone(){
  qm clone 9000 999 --name test-clone-cloud-init
  qm set 999 --sshkey ~/.ssh/id_rsa.pub
  qm set 999 --ipconfig0 ip=6.6.6.25/24,gw=6.6.6.1
  qm start 999
}
create_template() {
  virt-customize -a focal-server-cloudimg-amd64.img --install qemu-guest-agent
  virt-customize -a focal-server-cloudimg-amd64.img --run-command 'useradd -ms /bin/bash zac'
  virt-customize -a focal-server-cloudimg-amd64.img --run-command 'mkdir -p /home/zac/.ssh'
  virt-customize -a focal-server-cloudimg-amd64.img --ssh-inject zac:file:/home/zac/.ssh/id_rsa.pub
  virt-customize -a focal-server-cloudimg-amd64.img --run-command 'chown -R zac:zac /home/zac'
  virt-customize -a focal-server-cloudimg-amd64.img --run-command 'echo "zac:changemenow" | chpasswd'
  qm create 9000 --name "ubuntu-cloudinit-template" --memory 2048 --cores 2 --net0 virtio,bridge=vmbr0
  qm importdisk 9000 focal-server-cloudimg-amd64.img local-lvm
  qm set 9000 --scsihw virtio-scsi-pci --scsi0 local-lvm:vm-9000-disk-0
  qm set 9000 --boot c --bootdisk scsi0
  qm set 9000 --ide2 local-lvm:cloudinit
  qm set 9000 --serial0 socket --vga serial0
  qm set 9000 --agent enabled=1
  qm template 9000
}
remove_img(){
  [[ -f focal-server-cloudimg-amd64.img ]] && rm -f focal-server-cloudimg-amd64.img
}
main() {
  if qm status 9000 &> /dev/null; then
    qm stop 9000
    qm destroy 9000
  fi
  remove_img
  wget https://cloud-images.ubuntu.com/focal/current/focal-server-cloudimg-amd64.img
  create_template
  create_clone
  remove_img
}
main

Just wanted to say thanks, I’ve referenced this post a number of times and it has been very helpful!

You are welcome! I referenced it yesterday too, actually! There is an updated script for Ubuntu 22.04 in case you haven’t seen it – Proxmox Ubuntu 22.04 Jammy LTS Cloud-init Image Script

[…] was a neat trick I saw on Create ProxMox Ubuntu Image that I integrated into my […]